Shibuya Station is a major terminal close to the conference venue. We recommend booking one of the following hotels or any other accommodation near the station.

Shibuya Tokyu REI Hotel

JR-EAST Hotel Mets Shibuya

HOTEL UNIZO Tokyo Shibuya

| Time | Day 1 (15th May) |
|---|---|
| 9:00 am - | Registration |
| 10:30 am - 10:45 am | Welcome |
| 10:45 am - 11:45 am | Session 1: NLOS Imaging. Session Chair: Aswin C Sankaranarayanan (CMU) |
| 12:00 pm - 1:30 pm | Lunch |
| 1:30 pm - 2:30 pm | Session 2: Imaging with Novel Optics and Sensors. Session Chair: Sara Abrahamsson (UCSC) |
| 2:30 pm - 3:00 pm | Coffee break |
| 3:00 pm - 4:00 pm | Session 3: Video and High Speed Photography. Session Chair: Ashok Veeraraghavan (Rice University) |
| 4:00 pm - | Poster & Demo 1 |
| 6:00 pm - | Reception at ape cucina naturale (An block 1F) |

| Time | Day 2 (16th May) |
|---|---|
| 9:15 am - 10:35 am | Session 4: 3D Photography. Session Chair: Achuta Kadambi (UCLA) |
| 10:35 am - 11:00 am | Coffee break |
| 11:00 am - 12:00 pm | Session Chair: Suren Jayasuriya (Arizona State University) |
| 12:00 pm - 1:30 pm | Lunch |
| 1:30 pm - 2:30 pm | Session 5: Imaging for Microscopy. Session Chair: Adithya Kumar Pediredla (Rice University) |
| 2:30 pm - 3:00 pm | Coffee break |
| 3:00 pm - 4:00 pm | Session Chair: Hajime Nagahara (Osaka University) |
| 4:00 pm - | Poster & Demo 2 |
| 7:00 pm - | Banquet at Gonpachi Shibuya (E. Space Tower 14F) |

| Time | Day 3 (17th May) |
|---|---|
| 9:30 am - 10:30 am | Session 6: Reconstruction beyond 3D. Session Chair: Oliver S. Cossairt (Northwestern University) |
| 10:30 am - 11:00 am | Coffee break |
| 11:00 am - 12:00 pm | Session Chair: Sanjeev Koppal (University of Florida) |
| 12:00 pm - 1:30 pm | Lunch |
| 1:30 pm - 2:50 pm | Session 7: High-Performance / High Dynamic Range Photography. Session Chair: Mark Sheinin (Technion) |
| 2:50 pm - 3:20 pm | Concluding remarks and awards ceremony |

| Day 1 & Day 2 (15th-16th May) |
|---|
| 1. Joint optimization for compressive video sensing and reconstruction under hardware constraints |
| 2. Local Zoo Animal Stamp Rally Application using Image Recognition |
| 3. Reproduction of Takigi Noh Based on Anisotropic Reflection Rendering of Noh Costume with Dynamic Illumination |
| 4. Super Field-of-View Lensless Camera by Coded Image Sensors |
| 5. FlashFusion: Real-time Globally Consistent Dense 3D Reconstruction using CPU Computing |
| 6. Slope Disparity Gating using a Synchronized Projector-Camera System |
| 7. Episcan360: Active Epipolar Imaging for Live Omnidirectional Stereo |
| 8. Cinematic Virtual Reality with Head-Motion Parallax |
| 9. Coded Two-Bucket Cameras for Computer Vision |
| 10. A single-photon camera with 97 kfps time-gated 24 Gphotons/s 512 x 512 SPAD pixels for computational imaging and time-of-flight vision |

| Day1 (15th May) | Day2 (16th May) |

|---|---|

1. RESTORATION OF FOGGY AND MOTION-BLURRED ROAD SCENES |

2. Light-field camera based on single-pixel camera type acquisitions |

3. Improving Animal Recognition Accuracy using Deep Learning |

4. Player Tracking in Sports Video using 360 degree camera |

5. A Generic Multi-Projection-Center Model and Calibration Method for Light Field Cameras |

6. Improved Illumination Correction that Preserves Medium Sized Objects |

7. Dense Light Field Reconstruction from Sparse Sampling Using Residual Network |

8. Full View Optical Flow Estimation Leveraged from Light Field Superpixel |

9. Functional CMOS Image Sensor with flexible integration time setting among adjacent pixels |

10. Focus Manipulation Detection via Photometric Histogram Analysis |

11. Learning-Based Framework for Capturing Light Fields through a Coded Aperture Camera |

12. A Dataset for Benchmarking Time-Resolved Non-Line-of-Sight Imaging |

13. Skin-based identification from multispectral image data using CNNs |

14. A Bio-inspired Metalens Depth Sensor |

15. A method for passive, monocular distance measurement of virtual image in VR/AR |

16. Polarimetric Camera Calibration Using an LCD Monitor |

17. Ellipsoidal path connections for time-gated rendering |

18. Moving Frames Based 3D Feature Extraction of RGB-D Data |

19. Diffusion Equation Based Parameterization of Light Field and Its Computational Imaging Model |

20. Ray-Space Projection Model for Light Field Camera |

21. Refraction-free Underwater Active One-shot Scan using Light Field Camera |

22. Speckle Based Pose Estimation Considering Depth Information for 3D Measurement of Texture-less Environment |

23. Acceleration of 3D Measurement of Large Structures with Ring Laser and Camera via FFT-based Template Matching |

24. Unifying the refocusing algorithms and parameterizations for traditional and focused plenoptic cameras |

25. DeepToF: Off-the-Shelf Real-Time Correction of Multipath Interference in Time-of-Flight Imaging |

26. GigaVision: When Gigapixel Videography Meets Computer Vision |

27. Light Field Messaging with Deep Photographic Steganography |

28. Non-blind Image Restoration Based on Convolutional Neural Network |

29. Gradient-Based Low-Light Image Enhancement |

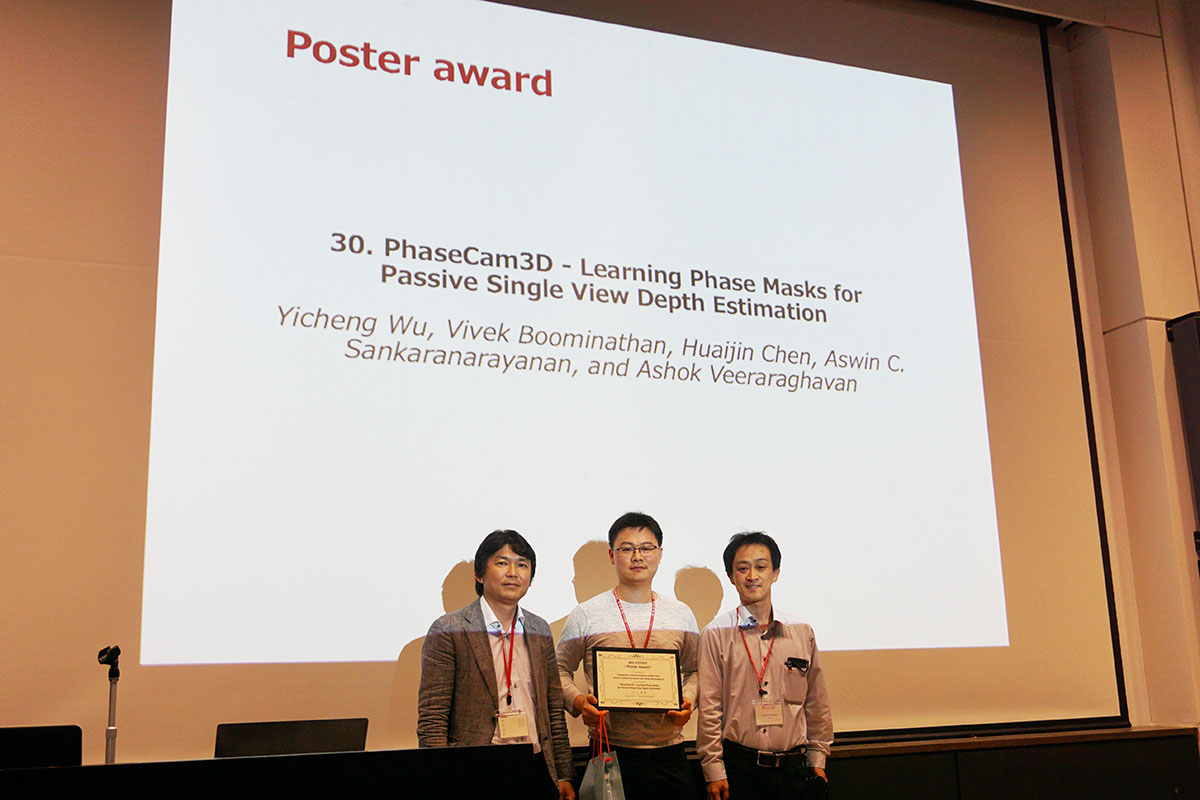

30. PhaseCam3D - Learning Phase Masks for Passive Single View Depth Estimation |

31. Hyperspectral Imaging with Random Printed Mask |

32. Wood Pixels for Near-Photorealistic Parquetry |

33. CloudCT: Spaceborne scattering tomography by a large formation of small satellites for improving climate predictions |

34. Hybrid optical-electronic convolutional neural networks with optimized diffractive optics for image classification |

35. Reflective and Fluorescent Separation under Narrow-Band Illumination |

|

36. Beyond Volumetric Albedo --- A Surface Optimization Framework for Non-Line-of-Sight Imaging |

|

37. Light Structure from Pin Motion: Simple and Accurate Point Light Calibration for Physics-based Modeling |

38. Lunar surface image restoration using U-Net based deep neural networks |

39. A Theory of Fermat Paths for Non-Line-of-Sight Shape Reconstruction |

40. Learning to Separate Multiple Illuminants in a Single Image |

42. Adaptive Lighting for Data Driven Non-line-of-sight 3D Localisation |

|

43. Directionally Controlled Time-of-Flight Ranging for Mobile Sensing Platforms |

44. 360 Panorama Synthesis from Sparse Set of Images with Unknown FOV |

45. Classification and Restoration of Compositely Degraded Images using Deep Learning |

46. Programmable spectrometry -- per-pixel material classification using learned filters |

47. SNLOS: Non-line-of-sight Scanning through Temporal Focusing |

|

48. STORM: Super-resolving Transients by OveRsampled Measurements |

|

49. Thermal Non-Line-of-Sight Imaging |

50. Spatio-temporal Phase Disambiguation in Depth Sensing |

51. Depth From Texture Integration |

|

52. Mirror Surface Reconstruction Using Polarization Field |

| Day 1 (15th May) |
|---|
| 1. Restoration of Foggy and Motion-Blurred Road Scenes |
| 3. Improving Animal Recognition Accuracy using Deep Learning |
| 5. A Generic Multi-Projection-Center Model and Calibration Method for Light Field Cameras |
| 7. Dense Light Field Reconstruction from Sparse Sampling Using Residual Network |
| 9. Functional CMOS Image Sensor with flexible integration time setting among adjacent pixels |
| 11. Learning-Based Framework for Capturing Light Fields through a Coded Aperture Camera |
| 13. Skin-based identification from multispectral image data using CNNs |
| 15. A method for passive, monocular distance measurement of virtual image in VR/AR |
| 17. Ellipsoidal path connections for time-gated rendering |
| 19. Diffusion Equation Based Parameterization of Light Field and Its Computational Imaging Model |
| 21. Refraction-free Underwater Active One-shot Scan using Light Field Camera |
| 23. Acceleration of 3D Measurement of Large Structures with Ring Laser and Camera via FFT-based Template Matching |
| 25. DeepToF: Off-the-Shelf Real-Time Correction of Multipath Interference in Time-of-Flight Imaging |
| 27. Light Field Messaging with Deep Photographic Steganography |
| 29. Gradient-Based Low-Light Image Enhancement |
| 31. Hyperspectral Imaging with Random Printed Mask |
| 33. CloudCT: Spaceborne scattering tomography by a large formation of small satellites for improving climate predictions |
| 37. Light Structure from Pin Motion: Simple and Accurate Point Light Calibration for Physics-based Modeling |
| 39. A Theory of Fermat Paths for Non-Line-of-Sight Shape Reconstruction |
| 43. Directionally Controlled Time-of-Flight Ranging for Mobile Sensing Platforms |
| 45. Classification and Restoration of Compositely Degraded Images using Deep Learning |
| 47. SNLOS: Non-line-of-sight Scanning through Temporal Focusing |
| 48. STORM: Super-resolving Transients by OveRsampled Measurements |
| 49. Thermal Non-Line-of-Sight Imaging |

| Day 2 (16th May) |
|---|
| 2. Light-field camera based on single-pixel camera type acquisitions |
| 4. Player Tracking in Sports Video using 360 degree camera |
| 6. Improved Illumination Correction that Preserves Medium Sized Objects |
| 8. Full View Optical Flow Estimation Leveraged from Light Field Superpixel |
| 10. Focus Manipulation Detection via Photometric Histogram Analysis |
| 12. A Dataset for Benchmarking Time-Resolved Non-Line-of-Sight Imaging |
| 14. A Bio-inspired Metalens Depth Sensor |
| 16. Polarimetric Camera Calibration Using an LCD Monitor |
| 18. Moving Frames Based 3D Feature Extraction of RGB-D Data |
| 20. Ray-Space Projection Model for Light Field Camera |
| 22. Speckle Based Pose Estimation Considering Depth Information for 3D Measurement of Texture-less Environment |
| 24. Unifying the refocusing algorithms and parameterizations for traditional and focused plenoptic cameras |
| 26. GigaVision: When Gigapixel Videography Meets Computer Vision |
| 28. Non-blind Image Restoration Based on Convolutional Neural Network |
| 30. PhaseCam3D - Learning Phase Masks for Passive Single View Depth Estimation |
| 32. Wood Pixels for Near-Photorealistic Parquetry |
| 34. Hybrid optical-electronic convolutional neural networks with optimized diffractive optics for image classification |
| 35. Reflective and Fluorescent Separation under Narrow-Band Illumination |
| 36. Beyond Volumetric Albedo --- A Surface Optimization Framework for Non-Line-of-Sight Imaging |
| 38. Lunar surface image restoration using U-Net based deep neural networks |
| 40. Learning to Separate Multiple Illuminants in a Single Image |
| 42. Adaptive Lighting for Data Driven Non-line-of-sight 3D Localisation |
| 44. 360 Panorama Synthesis from Sparse Set of Images with Unknown FOV |
| 46. Programmable spectrometry -- per-pixel material classification using learned filters |
| 50. Spatio-temporal Phase Disambiguation in Depth Sensing |
| 51. Depth From Texture Integration |
| 52. Mirror Surface Reconstruction Using Polarization Field |

| Date | Event |
|---|---|
| [Extended] Dec. 17, 2018 (23:59 PST) | Paper submission deadline |
| Dec. 17, 2018 (23:59 PST) | Supplemental material due |
| Feb. 11-15, 2019 | Rebuttal period |
| Feb. 26, 2019 | Paper decisions |
| [Extended] Apr. 5, 2019 | Camera ready versions |

| Date | Event |
|---|---|
| [Extended] Mar. 29, 2019 (23:59 PST) | Posters/demos deadline |
| Apr. 5, 2019 | Posters/demos decision |

| Date | Event |
|---|---|
| [Extended] Apr. 22, 2019 (23:59 JST) | Advance registration ends |
| May 17, 2019 | Late registration ends |
| May 15-17, 2019 | ICCP 2019 |

| Registration type | [Extended] Before Apr. 22 (23:59 JST) | After Apr. 22 (23:59 JST) |
|---|---|---|
| IEEE Student Member | 15,000 JPY | 21,000 JPY |
| IEEE Member | 25,000 JPY | 35,000 JPY |
| Student Non-Member | 21,000 JPY | 27,000 JPY |
| Non-Member | 35,000 JPY | 45,000 JPY |
| IEEE Life Member | 15,000 JPY | 15,000 JPY |

IEEE International Conference on Computational Photography (ICCP 2019) seeks high-quality submissions in all areas related to computational photography. The field of computational photography seeks to create new photographic and imaging functionalities and experiences that go beyond what is possible with traditional cameras and image processing tools. The IEEE International Conference on Computational Photography is organized with the vision of fostering the community of researchers, from many different disciplines, working on computational photography. We welcome all submissions that introduce new ideas to the field including, but not limited to, those in the following areas:

We are now accepting poster and demo submissions. Whereas ICCP papers must describe original research, posters and demos give an opportunity to showcase previously published or yet-to-be published work to a larger community. Submissions should be emailed to . More details below.

ICCP brings together researchers and practitioners from the multiple fields that computational photography intersects: computational imaging, computer graphics, computer vision, optics, art, and design. We therefore invite you to present your work to this broad audience during the ICCP posters session. Whereas ICCP papers must describe original research, the posters and demos give an opportunity to showcase previously published or yet-to-be published work to a larger community. Specifically, we seek posters presenting:

In addition to posters, we also welcome:

The list of accepted and presented posters and demos is announced on our conference website, which serves as a record of presentation.

Submissions should include one or more paragraphs describing the proposed poster/demo/artwork, as well as author names and affiliations. We strongly encourage you to submit supporting materials, such as published papers, images or other media, videos, demos, and websites describing the work. Attachments under 5 MB are accepted. Otherwise, please provide a URL, or use an attachment delivery service like Dropbox or Hightail. Please email your submission directly to .

Proposal submission deadline: [Extended] March 29th, 2019

Proposal acceptance notification: Apr. 5th, 2019

The total duration of each talk (both regular paper and invited) is 20 minutes, including 3 minutes for Q&A. The presenter(s) should plan to speak for no more than 17 minutes; please be mindful of finishing your presentation within the designated time slot. You should bring your own laptop for your presentation. The projector will have HDMI and D-sub connectors.

We do not provide any display adapters. If you need a specific display adapter (e.g., USB-C to HDMI or D-sub), please remember to bring your own. The projector does not support high resolutions, so please set your laptop to a standard resolution and refresh rate.

Please introduce yourself to the session chair at least 10 minutes before the start of your session, and test the laptop-projector connection at the podium.

The poster format is A0 portrait. Adhesive material and/or pins will be provided for mounting the posters to the boards. If you have special requirements, please contact the ICCP 2019 Demo/Poster Chairs (demopostersiccp2019@gmail.com) as soon as possible. We will try to accommodate your requests as much as possible.

Odd poster numbers are allocated for presentation on 15th May.

Even poster numbers are allocated for presentation on 16th May.

Poster presenters can install their posters anytime prior to the poster session on the corresponding date.

Each demo booth is roughly 1800 mm x 1800 mm. Two 1530 mm x 890 mm (H x W) panels will be provided for each demo to display posters and other materials. Adhesive material and/or pins will be provided for mounting the posters to the boards. If you have special requirements, please contact the ICCP 2019 Demo/Poster Chairs (demopostersiccp2019@gmail.com) as soon as possible. We will try to accommodate your requests as much as possible.

Demo presentations will take place on both 15th and 16th May.

Demo presenters can install their demos from 9:30 am on 15th May and may leave the installation in place overnight for the presentation on 16th May (the presentation hall will be locked overnight). Demos may be uninstalled on 17th May.

| | Platinum | Gold | Silver | Bronze |
|---|---|---|---|---|
| Full admissions (free registrations) | 4 | 3 | 2 | 1 |
| Demo table (if requested) | 1 | 1 | 1 | 1 |
| Logo on website and all printed materials | ✓ | ✓ | ✓ | ✓ |
| Special recognition during banquet and other ceremonies | ✓ | ✓ | ✓ | ✓ |
| Customized events | * | - | - | - |
| Cost (incl. sales tax; USD or JPY) | 10,000 USD / 1,100,000 JPY | 7,500 USD / 825,000 JPY | 5,000 USD / 550,000 JPY | 2,500 USD / 275,000 JPY |

Equity, Diversity, and Inclusion are central to the goals of the IEEE Computer Society and all of its conferences. Equity at its heart is about removing barriers, biases, and obstacles that impede equal access and opportunity to succeed. Diversity is fundamentally about valuing human differences and recognizing diverse talents. Inclusion is the active engagement of Diversity and Equity.

A goal of the IEEE Computer Society is to foster an environment in which all individuals are entitled to participate in any IEEE Computer Society activity free of discrimination. For this reason, the IEEE Computer Society is firmly committed to team compositions in all sponsored activities, including but not limited to, technical committees, steering committees, conference organizations, standards committees, and ad hoc committees that display Equity, Diversity, and Inclusion.

IEEE Computer Society meetings, conferences and workshops must provide a welcoming, open and safe environment, that embraces the value of every person, regardless of race, color, sex, sexual orientation, gender identity or expression, age, marital status, religion, national origin, ancestry, or disability. All individuals are entitled to participate in any IEEE Computer Society activity free of discrimination, including harassment based on any of the above factors.

IEEE believes that science, technology, and engineering are fundamental human activities, for which openness, international collaboration, and the free flow of talent and ideas are essential. Its meetings, conferences, and other events seek to enable engaging, thought provoking conversations that support IEEE’s core mission of advancing technology for humanity. Accordingly, IEEE is committed to providing a safe, productive, and welcoming environment to all participants, including staff and vendors, at IEEE-related events.

IEEE has no tolerance for discrimination, harassment, or bullying in any form at IEEE-related events. All participants have the right to pursue shared interests without harassment or discrimination in an environment that supports diversity and inclusion. Participants are expected to adhere to these principles and respect the rights of others.

IEEE seeks to provide a secure environment at its events. Participants should report any behavior inconsistent with the principles outlined here to on-site staff, security or venue personnel, or to eventconduct@ieee.org.